OpenAI’s Codex app launched with a feature called Automations—agentic workflows that run on a schedule.

https://openai.com/index/introducing-the-codex-app

After two days of testing, I wanted to understand how they actually work.

https://developers.openai.com/codex/app/automations

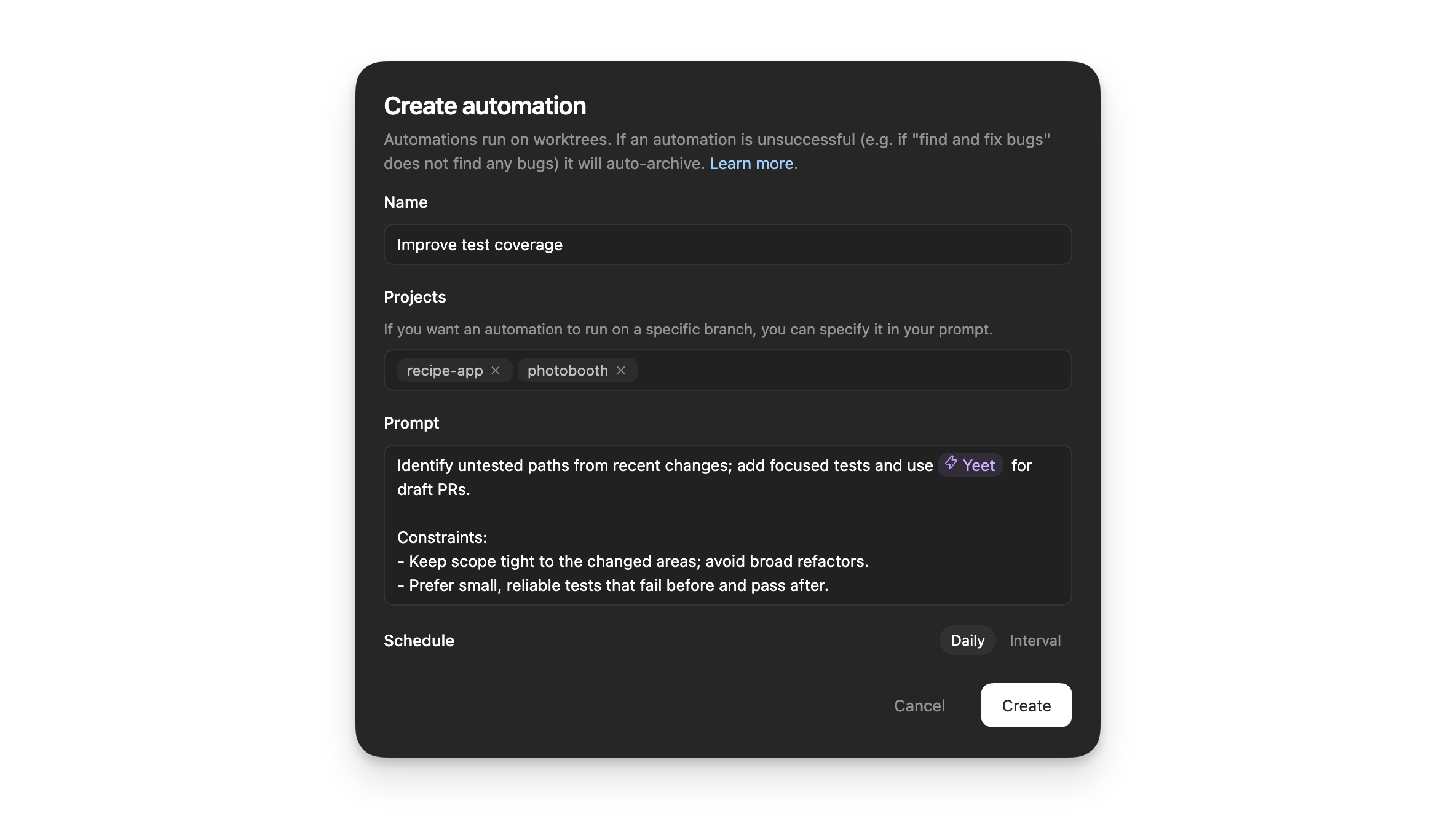

Delegate repetitive work with Automations

Automations run in the background on a schedule you define.

With the Codex app, you can also set up Automations that let Codex work in the background on an automatic schedule

Codex App Automations Demo

This matters because scheduled Automations let AI agents do useful work in the background without you prompting them each time.

The Evolution

Before this, agentic workflows were driven by graph-based systems whose steps had to be defined up front.

We started with traditional workflow engines like n8n, Make, and Zapier.

We then moved toward newer agent-friendly workflow engines like LangGraph, PydanticAI Graphs, and crewAI Workflows.

While these solutions worked, a graph fixed in advance is too rigid when business requirements keep changing.

New Workflow Primitives

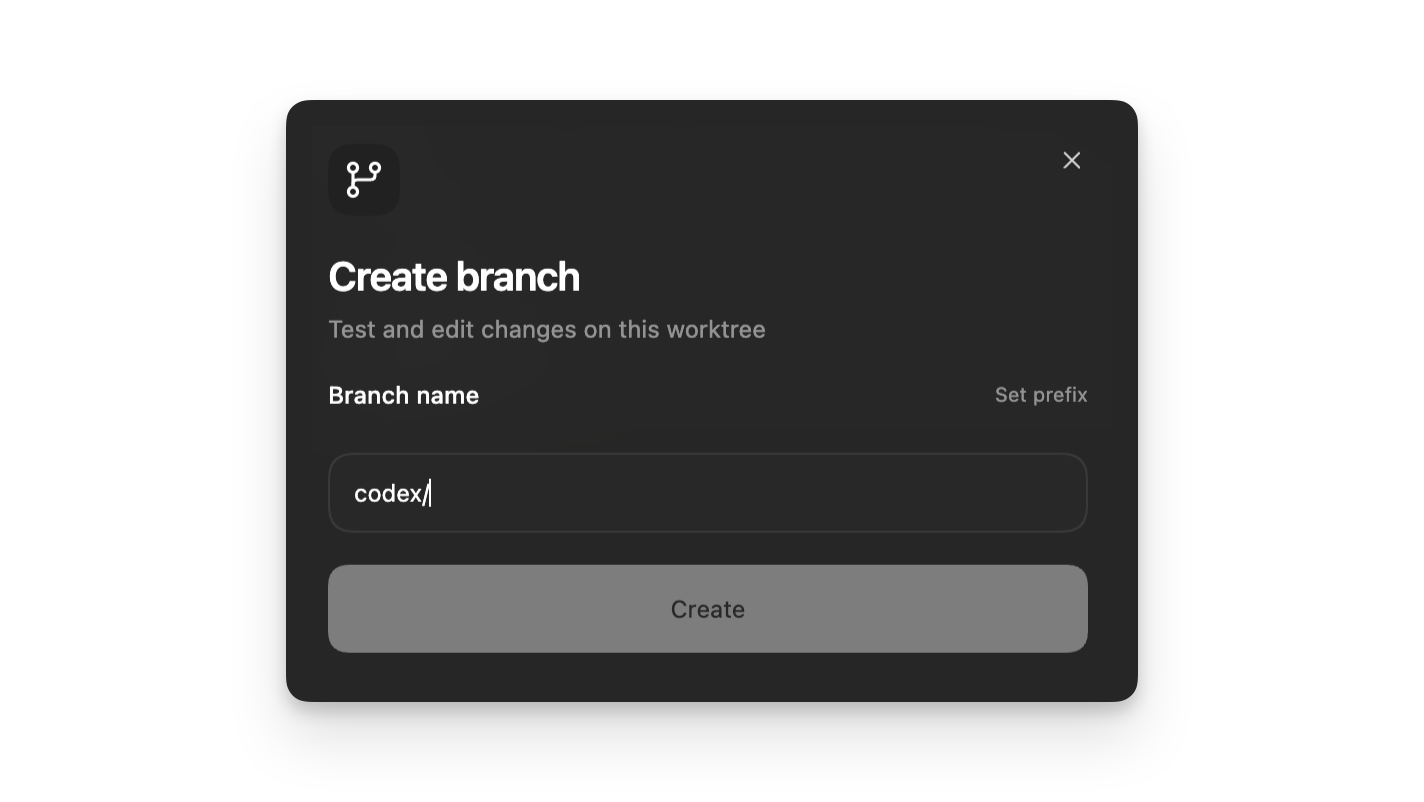

We now have a new primitive: AI agents + git commands.

Automations combine instructions with optional skills, running on a schedule you define. When an Automation finishes, the results land in a review queue so you can jump back in and continue working if needed.

OpenAI uses this technique internally:

At OpenAI, we’ve been using Automations to handle the repetitive but important tasks, like daily issue triage, finding and summarizing CI failures, generating daily release briefs, checking for bugs, and more.

They’re building cloud-based triggers:

We’re also building out Automations with support for cloud-based triggers, so Codex can run continuously in the background—not just when your computer is open.

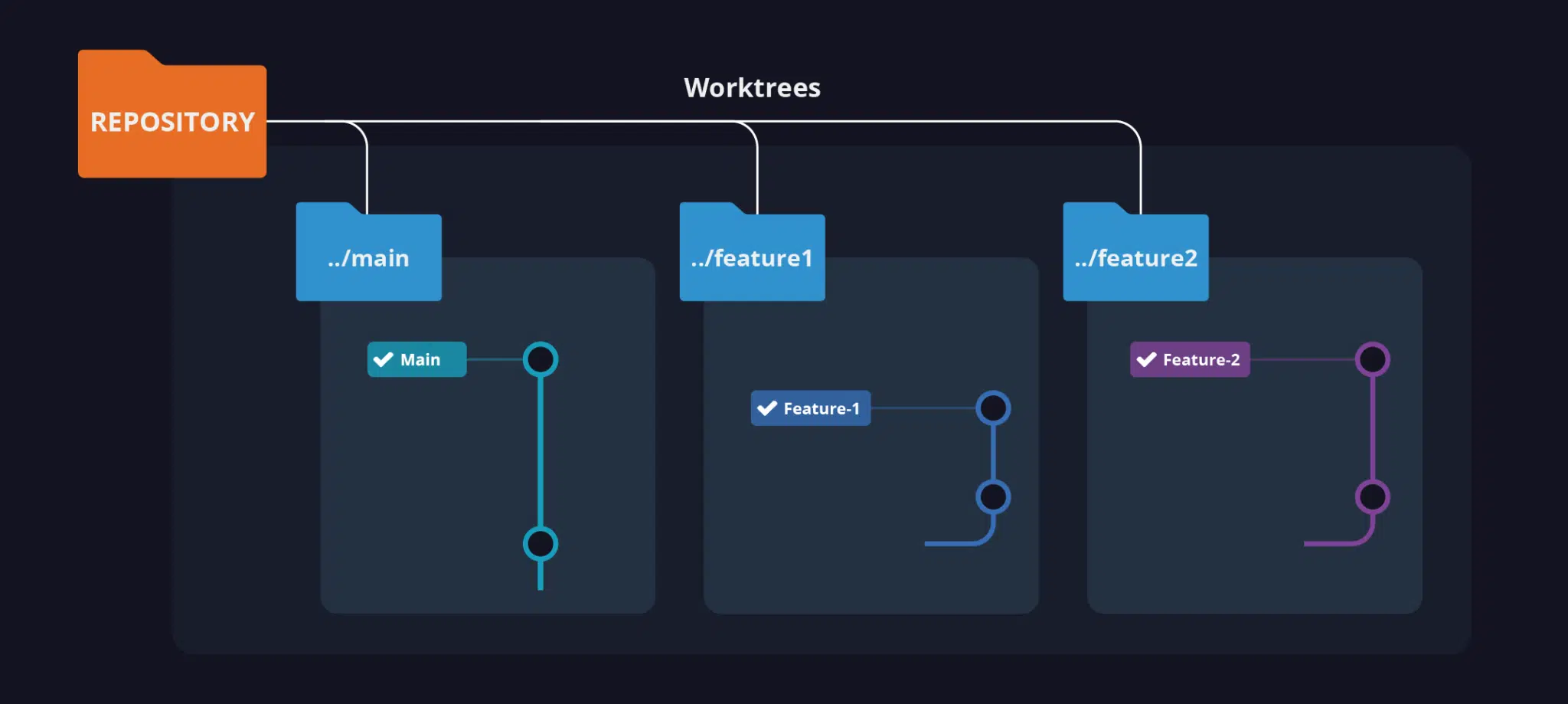

Git Worktrees

The technical implementation uses git worktrees:

In Git repositories, each automation run starts in a new worktree so it doesn’t interfere with your main checkout. In non-version-controlled projects, automations run directly in the project directory.

When an automation runs in a Git repository, Codex uses a dedicated background worktree. In non-version-controlled projects, automations run directly in the project directory. Consider using Git to enable running on background worktrees. You can have the same automation run on multiple projects.
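The rule described above can be sketched as a small shell function. This is only an illustration of the documented behavior, not Codex's actual implementation: the helper name `prepare_run_dir` and the temporary worktree location are assumptions made for the example.

```shell
set -eu

# Hypothetical helper: print the directory an automation run would use.
# In a Git repository it creates a fresh detached worktree; otherwise it
# falls back to the project directory itself.
prepare_run_dir() {
    project="$1"
    if git -C "$project" rev-parse --is-inside-work-tree >/dev/null 2>&1; then
        run_dir="$(mktemp -d)/run"
        git -C "$project" worktree add -q --detach "$run_dir" HEAD
        printf '%s\n' "$run_dir"
    else
        printf '%s\n' "$project"
    fi
}

# Demo: one Git project and one plain directory.
scratch="$(mktemp -d)"
git init -q "$scratch/repo"
git -C "$scratch/repo" -c user.name="Demo" -c user.email="demo@example.com" \
    commit -q --allow-empty -m "initial commit"
mkdir "$scratch/plain"

git_run="$(prepare_run_dir "$scratch/repo")"
plain_run="$(prepare_run_dir "$scratch/plain")"
echo "git project runs in:   $git_run"
echo "plain project runs in: $plain_run"
```

The Git project gets a separate directory for the run, while the plain directory is used as-is, which is why changes made by a run in a non-Git project land directly in your files.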

Most projects that follow software development best practices already use Git for source control, so this requirement is rarely an obstacle.

Example: Automated Bug Fixes

OpenAI shares a concrete example combining Skills and Automations:

Trigger it with:

Check my commits from the last 24h and submit a $recent-code-bugfix
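For reference, "my commits from the last 24h" corresponds roughly to the Git query below. How Codex gathers the commits is not stated in the quoted material, so treat this as the manual equivalent rather than what the skill runs. The demo repository, author identity, and commit messages are made up for the example.

```shell
set -eu

# Throwaway repository with a known author identity.
repo="$(mktemp -d)"
git init -q "$repo"
cd "$repo"
git config user.name "Demo"
git config user.email "demo@example.com"

# One commit backdated to 2020, one made right now.
GIT_AUTHOR_DATE="2020-01-01 12:00:00 +0000" \
GIT_COMMITTER_DATE="2020-01-01 12:00:00 +0000" \
    git commit -q --allow-empty -m "old commit"
git commit -q --allow-empty -m "recent commit"

# Subjects of commits by this author from the last 24 hours.
recent="$(git log --since="24 hours ago" \
    --author="$(git config user.email)" --format='%s')"
echo "$recent"
```

Only the second commit is listed, because `--since` filters out the backdated one.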

Understanding Git Worktrees

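Git worktrees let you check out multiple branches of the same repository at once. Each worktree is a separate folder with its own branch checked out, so you switch branches by changing directories. All worktrees share one repository history, and a given branch can be checked out in only one worktree at a time.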

Key commands:
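A minimal sketch of the core `git worktree` subcommands, run against a throwaway repository so it is safe to try. The branch name `feature-x` and the directory layout are illustrative.

```shell
set -eu

# Throwaway repository so the commands below have something to act on.
demo="$(mktemp -d)"
git init -q "$demo/main"
cd "$demo/main"
git -c user.name="Demo" -c user.email="demo@example.com" \
    commit -q --allow-empty -m "initial commit"

# Create a second working directory on a new branch.
git worktree add -q -b feature-x ../feature-x

# List every worktree attached to this repository.
git worktree list
worktree_count="$(git worktree list --porcelain | grep -c '^worktree ')"

# Remove the extra worktree once the work is merged or abandoned,
# then clear any stale administrative entries.
git worktree remove ../feature-x
git worktree prune
remaining="$(git worktree list --porcelain | grep -c '^worktree ')"
```

Removing a worktree deletes its folder but keeps the branch, so any commits made there remain available from the main checkout.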

OpenAI abstracts this complexity away from users.

Why Worktrees Matter

Work in parallel with Codex without breaking each other as you work

Worktrees enable async execution: your automation runs in isolation while you continue working in your main checkout. Several agents can run in parallel the same way, each in its own worktree, without interfering with one another.
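To make the isolation concrete, here is a small sketch in which an "agent" commits in its own worktree while the main checkout holds an uncommitted edit to the same file. The branch name `agent-run`, the file `app.txt`, and both identities are assumptions for the demo. Codex's own worktree layout may differ.

```shell
set -eu

base="$(mktemp -d)"
git init -q "$base/main"
cd "$base/main"
echo "v1" > app.txt
git add app.txt
git -c user.name="Demo" -c user.email="demo@example.com" \
    commit -q -m "add app.txt"

# The "agent" gets its own worktree on its own branch.
git worktree add -q -b agent-run ../agent-run

# The agent edits and commits inside its worktree...
echo "v2 from agent" > ../agent-run/app.txt
git -C ../agent-run -c user.name="Agent" -c user.email="agent@example.com" \
    commit -q -am "agent change"

# ...while you edit the same file in the main checkout, uncommitted.
echo "v1 plus my local edit" > app.txt

main_content="$(cat app.txt)"
agent_content="$(cat ../agent-run/app.txt)"
echo "main checkout:  $main_content"
echo "agent worktree: $agent_content"

# Review the agent's work from the main checkout without switching branches.
agent_log="$(git log --oneline -1 agent-run)"
echo "$agent_log"
```

Neither side overwrites the other: the local edit survives in the main checkout, and the agent's commit sits on its own branch until you decide to review or merge it.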