Coding Benchmark Snapshot

Terminal-Bench 2.0 · SWE-Bench Verified · SWE-Bench Pro (Public)

| Model | Terminal-Bench 2.0 | SWE-Bench Verified | SWE-Bench Pro (Public) |
|---|---|---|---|
| Gemini 3.1 Pro (High) | 68.5% | 80.6% | 54.2% |
| Gemini 3 Pro (High) | 56.9% | 76.2% | 43.3% |
| Sonnet 4.6 (Max) | 59.1% | 79.6% | – |
| Opus 4.6 (Max) | 65.4% | 80.8% | – |
| GPT-5.2 | 54.0% | 80.0% | 55.6% |
| GPT-5.2 (xhigh) | 62.2% | – | – |
| GPT-5.3-Codex | 64.7% | – | – |
| GPT-5.3-Codex (xhigh) | 77.3% | – | 56.8% |

Source: https://blog.google/innovation-and-ai/models-and-research/gemini-models/gemini-3-1-pro

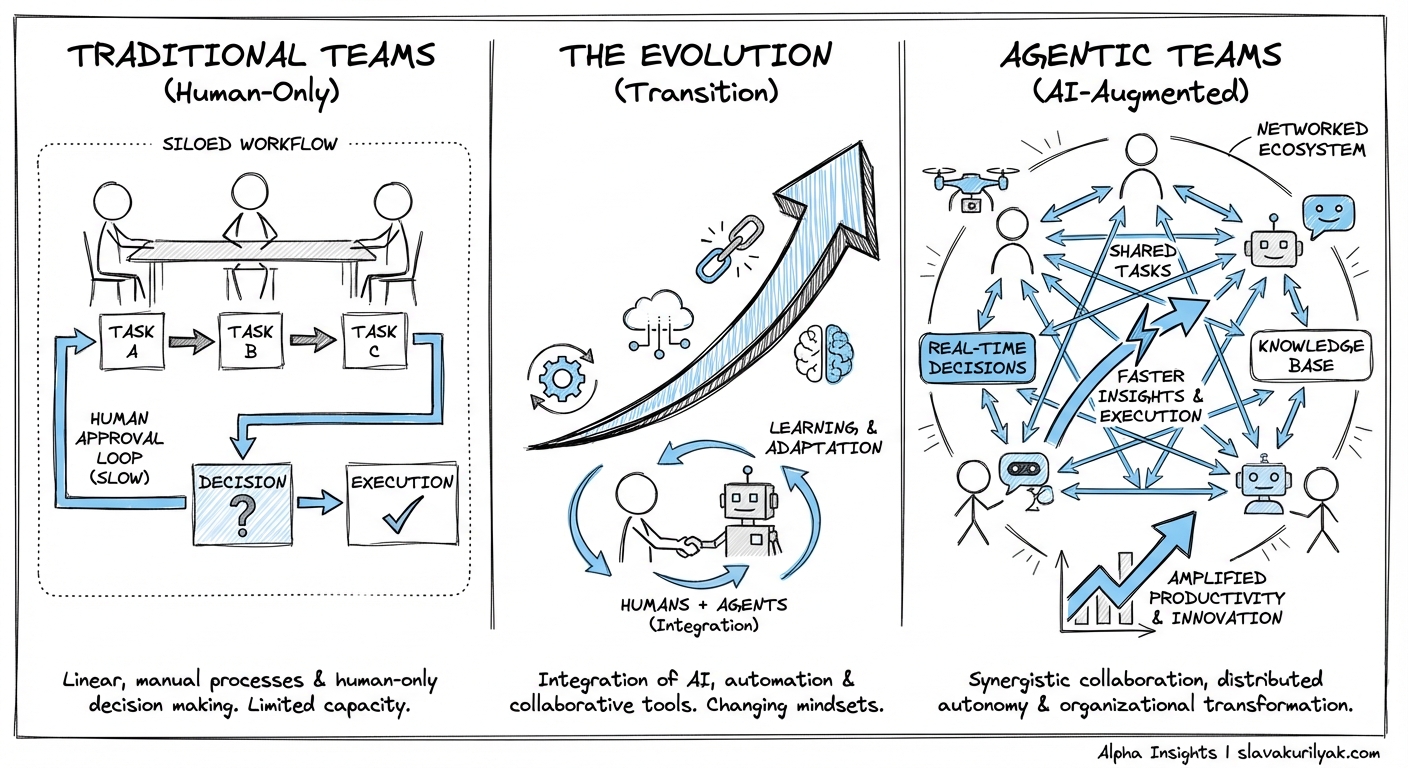

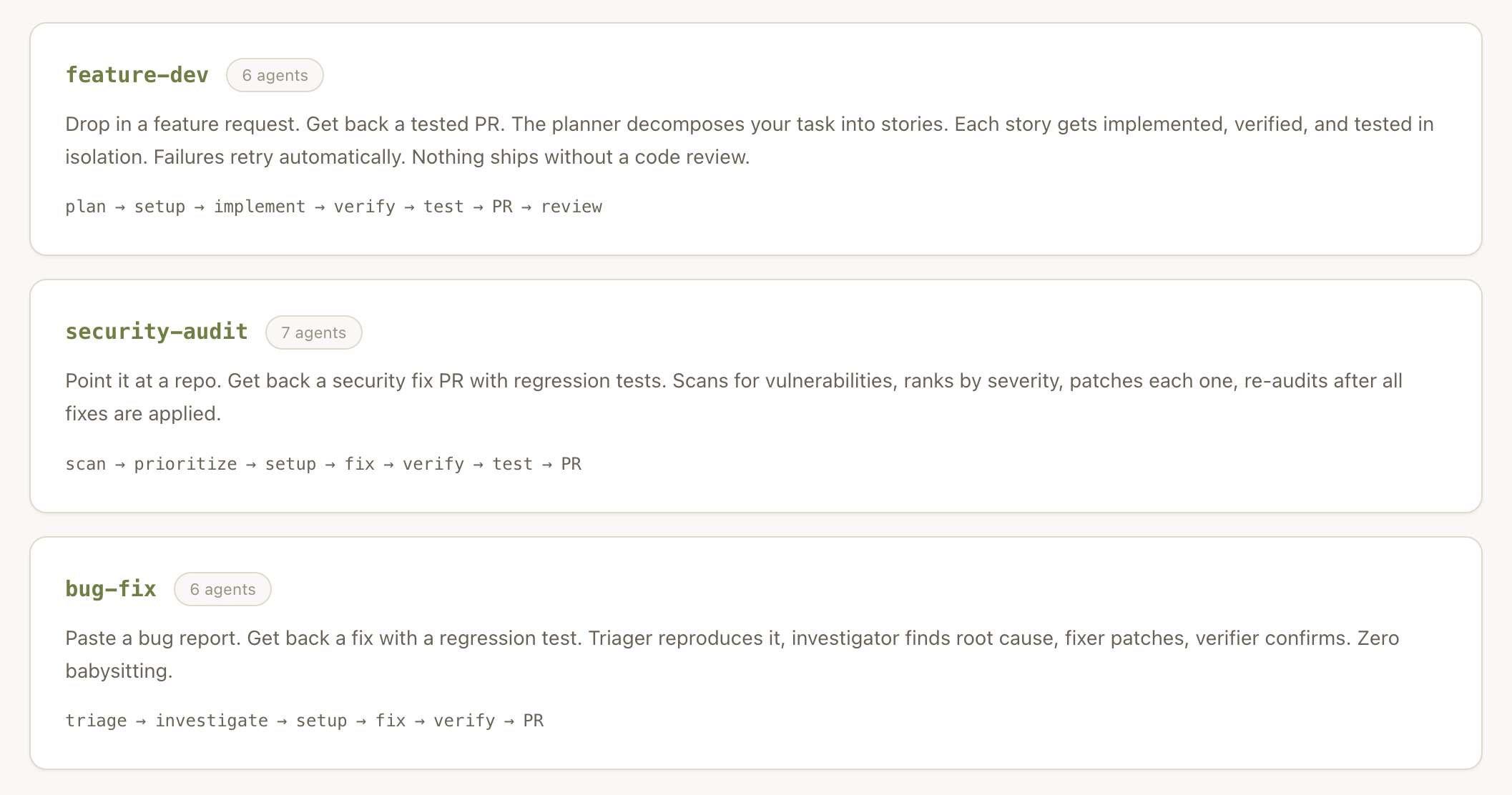

We are now living in the agentic age of organizational growth

AI agents, or just agents, are helping

Last year, Microsoft published the Work Trend Index Annual Report in which they defined ‘hybrid’ teams of humans + agents

I read that report but did not think much of it until I started to reflect on the progress of multi-agent systems like Claude Code and OpenClaw

Here is the latest attempt by Anthropic as of Feb 5th

Claude Code now supports agent teams (in research preview)

— Lydia Hallie ✨ (@lydiahallie) February 5, 2026

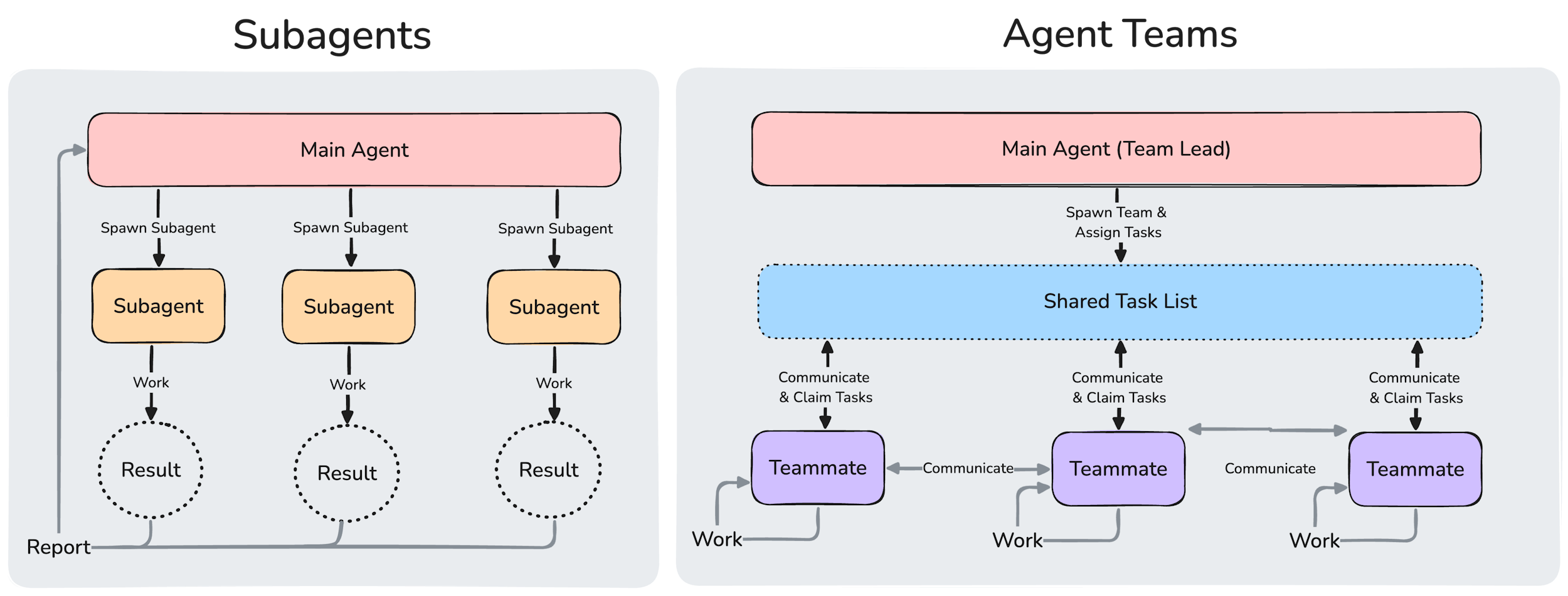

Instead of a single agent working through a task sequentially, a lead agent can delegate to multiple teammates that work in parallel to research, debug, and build while coordinating with each other.

Try it out today by… pic.twitter.com/vi7lUJDOTi

According to Anthropic’s agent teams docs:

Coordinate multiple Claude Code instances working together as a team, with shared tasks, inter-agent messaging, and centralized management.

So what happened? We went from using one AI agent at a time to using multi-agent systems

This is possible thanks to agentic orchestration

Agents coordinating other agents?

This is one of the unlocks of OpenClaw

OpenClaw improves on traditional agent runtimes by adding a gateway.

In OpenClaw, heartbeats are Markdown files and cron jobs are JSON files.

OpenAI Codex uses Git worktrees in the Codex macOS app, but the idea of cron-inspired automation is similar to what I covered in agentic automation.

Note that OpenClaw by itself sets up only one AI agent to run for you

We need a better agentic harness or orchestrator like antfarm by Ryan Carson

If you’re using @openclaw this will be a big unlock.

Antfarm is a batteries-included agent team that operates reliably and deterministically.

Works with OpenClaw using just crons, YAML and SQLite.

It auto-runs Ralph loops after creating atomic user stories.

I open sourced it… https://t.co/g6a1N0jwel

— Ryan Carson (@ryancarson) February 9, 2026

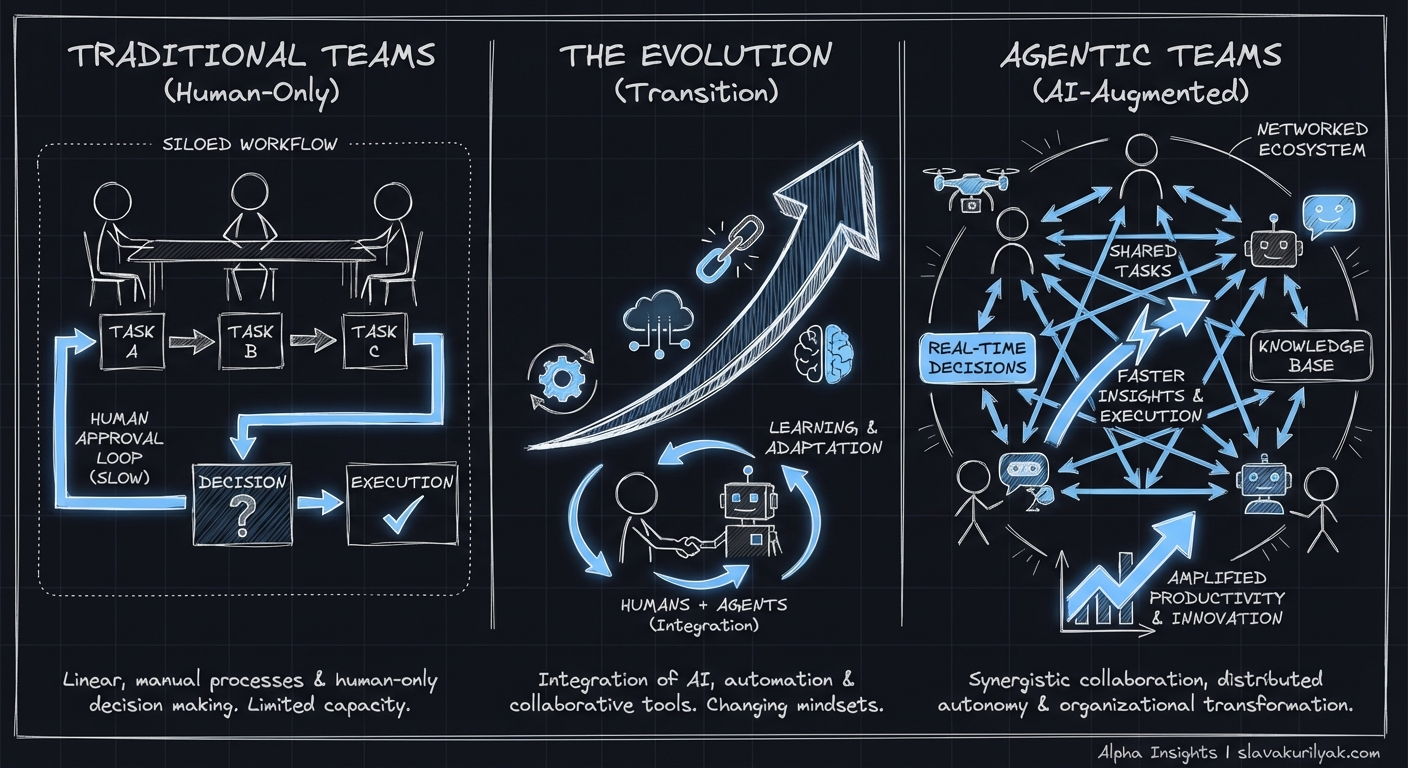

When you spin up your first antfarm, you get 6 agents

Now we are getting closer to a team!

Let’s discuss the architecture of this new agentic team inspired by antfarm

antfarm combines crons (cron jobs) with YAML and SQLite.
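To make that combination concrete, here is a minimal sketch of how a cron-style tick could poll a SQLite table for the next pending workflow step. The table schema, the step names, and the `cron_tick` function are my own assumptions for illustration, not antfarm's actual implementation. The `WORKFLOW` list stands in for what a parsed YAML file might contain.

```python
import sqlite3

# Hypothetical workflow steps, standing in for a parsed YAML definition.
WORKFLOW = ["plan", "implement", "verify"]


def init_db(conn, steps):
    """Create a (hypothetical) steps table and queue every step as pending."""
    conn.execute(
        "CREATE TABLE steps (id INTEGER PRIMARY KEY, name TEXT, status TEXT)"
    )
    conn.executemany(
        "INSERT INTO steps (name, status) VALUES (?, 'pending')",
        [(step,) for step in steps],
    )
    conn.commit()


def cron_tick(conn):
    """One scheduled wake-up: pick the earliest pending step and mark it done."""
    row = conn.execute(
        "SELECT id, name FROM steps WHERE status = 'pending' ORDER BY id LIMIT 1"
    ).fetchone()
    if row is None:
        return None  # nothing left to do
    step_id, name = row
    # A real system would hand the step to an agent here.
    conn.execute("UPDATE steps SET status = 'done' WHERE id = ?", (step_id,))
    conn.commit()
    return name


def run_all(steps):
    """Simulate repeated cron ticks until the queue is empty."""
    conn = sqlite3.connect(":memory:")
    init_db(conn, steps)
    order = []
    while (name := cron_tick(conn)) is not None:
        order.append(name)
    conn.close()
    return order
```

Calling `run_all(WORKFLOW)` returns the steps in the order they were queued. Because the state lives in the database rather than in any agent's memory, each tick can be short-lived.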

The code execution step in each workflow is built around a concept known as the ‘Ralph Wiggum Loop,’ named after the simpleton character from ‘The Simpsons’

‘Ralph’ functions as an autonomous coding loop and shares the same workflow as a human developer

That means Ralph consults a list of tasks to be completed, implements them, runs tests, commits the code, marks the task as completed, logs what it learned from the process, and then selects the next task from the list.

Ralph picks one story then executes it
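The loop described above can be sketched in a few lines of Python. This is an illustration under my own assumptions, not Ralph's actual code: `Story`, `RalphLoop`, and the `implement` and `run_tests` callbacks are hypothetical names, and the commit is simulated by appending a message to a list.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Story:
    title: str
    done: bool = False


@dataclass
class RalphLoop:
    stories: List[Story]
    implement: Callable[[Story], None]   # hypothetical: does the coding work
    run_tests: Callable[[Story], bool]   # hypothetical: True if tests pass
    commits: List[str] = field(default_factory=list)
    learnings: List[str] = field(default_factory=list)

    def next_story(self) -> Optional[Story]:
        """Pick the first story that is not done yet."""
        return next((s for s in self.stories if not s.done), None)

    def run(self, max_iterations: int = 10) -> List[Story]:
        for _ in range(max_iterations):
            story = self.next_story()
            if story is None:
                break  # task list is empty
            self.implement(story)
            if self.run_tests(story):
                self.commits.append(f"feat: {story.title}")  # simulated commit
                story.done = True
                self.learnings.append(f"completed {story.title}")
            else:
                # The story stays open and is retried on the next iteration.
                self.learnings.append(f"tests failed for {story.title}")
        return self.stories
```

The `max_iterations` cap is a design choice of this sketch: it keeps a story that never passes its tests from looping forever.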

How does it work?

A human says ‘OpenClaw Team, I want to build this new feature.’ Then a specialized agent ‘interviews’ the human about specifics the AI team needs to know. Then, a different agent turns those instructions into a prioritized list of tasks. Different agents complete the actual coding, while additional category experts check and verify their work.
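The hand-offs in that paragraph can be sketched as a simple pipeline. Everything here is hypothetical (the function names, the `PipelineTask` type, and the rule that each clarifying answer becomes one task). Real agents would call a model at each stage instead of running these stubs.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class PipelineTask:
    description: str
    priority: int
    implemented: bool = False
    verified: bool = False


def interview(request: str, answers: Dict[str, str]) -> dict:
    """Interviewer agent: merge the request with the human's answers into a spec."""
    return {"request": request, "details": dict(answers)}


def plan(spec: dict) -> List[PipelineTask]:
    """Planner agent: one task per detail, prioritized in the order given."""
    return [
        PipelineTask(f"{key}: {value}", priority=i)
        for i, (key, value) in enumerate(spec["details"].items(), start=1)
    ]


def develop(task: PipelineTask) -> PipelineTask:
    """Developer agent: stub that marks the task as implemented."""
    task.implemented = True
    return task


def verify(task: PipelineTask) -> PipelineTask:
    """Verifier agent: only signs off on work that was actually implemented."""
    task.verified = task.implemented
    return task


def run_pipeline(request: str, answers: Dict[str, str]) -> List[PipelineTask]:
    spec = interview(request, answers)
    tasks = sorted(plan(spec), key=lambda t: t.priority)
    return [verify(develop(task)) for task in tasks]
```

For example, `run_pipeline("Add login", {"auth": "email", "ui": "modal"})` yields two verified tasks, with the `auth` task first.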

Spec’ing is done via spec-driven development (SDD)

Maybe we should treat agentic teams as humans + RPG (role playing game) agents

According to https://www.antfarm.cool:

Built on the Ralph loop

Each agent runs in a fresh session with clean context. Memory persists through git history and progress files — the same autonomous loop pattern from Ralph, scaled to multi-agent workflows.
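Here is a small sketch of what "fresh session, persistent memory" could look like. It assumes a plain-text progress file and leaves out the git history part. The file name and the `run_session` function are hypothetical, not antfarm's actual format.

```python
import tempfile
from pathlib import Path
from typing import List


def run_session(progress_file: Path, agent_name: str, note: str) -> List[str]:
    """Simulate one fresh agent session.

    The session holds no in-memory state from earlier runs; everything it
    knows comes from reading the progress file at startup.
    """
    context = progress_file.read_text().splitlines() if progress_file.exists() else []
    # ... the agent would do its work here, guided by `context` ...
    with progress_file.open("a") as f:
        f.write(f"{agent_name}: {note}\n")
    return context


def demo():
    """Run two sessions back to back and return what each one started with."""
    with tempfile.TemporaryDirectory() as tmp:
        progress = Path(tmp) / "progress.txt"
        first = run_session(progress, "developer", "implemented story 1")
        second = run_session(progress, "verifier", "story 1 passes tests")
        return first, second
```

In `demo()`, the first session starts with an empty context, while the second one sees the developer's note even though the two sessions share no variables.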

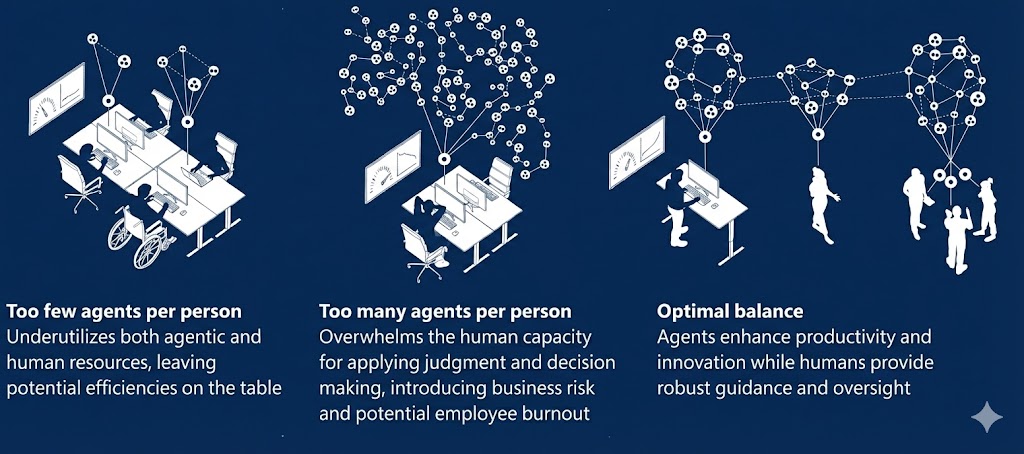

The Microsoft team recognized that it is possible to get overwhelmed by too many AI agents

High-Level Architecture

Agent Lifecycle (Ralph Loop Model)

Here is how antfarm works

Multi-Agent Story Isolation (feature-dev example)

Custom Workflow Definition (YAML → Runtime)

End-to-End Example Run

Why Antfarm Works

Instead of:

‘One big AI agent doing everything’

It becomes:

‘A deterministic assembly line of specialized agents’

Let’s evaluate how Anthropic’s agent teams work

One way to understand it is by contrasting it with subagents

Claude Agent Teams, Taught Incrementally

Step 1: One agent, one thread

Start simple: one agent handles everything in sequence.

Step 2: Add a lead and specialists

The lead delegates. Teammates run in parallel.
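A rough way to picture this step is a lead that fans work out to a thread pool and collects the results. This is only an analogy in plain Python, not how Claude Code implements teammates. The `lead` and `teammate` functions and the role names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict


def teammate(role: str, task: str) -> str:
    """A specialist doing its part of the work (stubbed out here)."""
    return f"{role} finished: {task}"


def lead(assignments: Dict[str, str]) -> Dict[str, str]:
    """Delegate one task per teammate, run them in parallel, gather results."""
    with ThreadPoolExecutor(max_workers=max(1, len(assignments))) as pool:
        futures = {
            role: pool.submit(teammate, role, task)
            for role, task in assignments.items()
        }
        return {role: future.result() for role, future in futures.items()}
```

Usage: `lead({"researcher": "read the docs", "debugger": "trace the crash"})` returns one result per role once every teammate has finished.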

Step 3: Share tasks and messages

Agent teams add a shared task list and direct teammate messaging.

Step 4: Run the claim-and-complete loop

Each teammate claims unblocked work, executes, and updates status.
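The claim-and-complete loop can be sketched with a shared task list that tracks dependencies. The class names, status strings, and round-robin scheduling below are my own assumptions for illustration. The simulation is sequential, so a truly parallel version would need a lock around `claim`.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class TeamTask:
    name: str
    blocked_by: List[str] = field(default_factory=list)
    status: str = "pending"
    owner: Optional[str] = None


class SharedTaskList:
    def __init__(self, tasks: List[TeamTask]):
        self.tasks = {t.name: t for t in tasks}

    def claim(self, teammate: str) -> Optional[TeamTask]:
        """Hand the teammate the first pending task whose blockers are all done."""
        for task in self.tasks.values():
            unblocked = all(
                self.tasks[dep].status == "completed" for dep in task.blocked_by
            )
            if task.status == "pending" and unblocked:
                task.status = "in_progress"
                task.owner = teammate
                return task
        return None

    def complete(self, task: TeamTask) -> None:
        task.status = "completed"


def run_team(task_list: SharedTaskList, teammates: List[str]) -> List[Tuple[str, str]]:
    """Round-robin simulation: each teammate claims, executes, updates status."""
    log = []
    progress = True
    while progress:
        progress = False
        for name in teammates:
            task = task_list.claim(name)
            if task is not None:
                # The actual work would happen here.
                task_list.complete(task)
                log.append((name, task.name))
                progress = True
    return log
```

Note how a task that depends on another stays unclaimed until its blocker is completed, regardless of the order in which the tasks were listed.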

Step 5: Add quality gates and cleanup

Hooks enforce standards before tasks close; lead shuts down the team.

This is the shift: from one big agent to a coordinated team with explicit roles, shared state, and controlled handoffs.

Step 6: Gate the feature explicitly

Agent teams stay opt-in behind an environment flag.
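Gating a feature behind an environment flag is a common pattern, and a minimal version looks like this. The variable name `AGENT_TEAMS_ENABLED` is a placeholder I made up for the sketch. Check the agent teams docs for the real flag.

```python
import os
from typing import Mapping, Optional

# Placeholder name for illustration only; not the real Claude Code flag.
FLAG = "AGENT_TEAMS_ENABLED"


def agent_teams_enabled(env: Optional[Mapping[str, str]] = None) -> bool:
    """Return True only when the flag is explicitly switched on."""
    env = os.environ if env is None else env
    return env.get(FLAG, "").strip().lower() in {"1", "true", "yes"}


def start(env: Optional[Mapping[str, str]] = None) -> str:
    """Fall back to the single-agent mode unless the user opted in."""
    return "team" if agent_teams_enabled(env) else "single-agent"
```

The default is off: an unset or empty variable keeps the single-agent behavior, which is what "opt-in" means in practice.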

Step 7: Use local team state files

Coordination is driven by local frontmatter state, not hidden magic.
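To show what "frontmatter state" can mean, here is a tiny parser for a file that starts with a `---` delimited block of `key: value` lines. The field names in the usage example are hypothetical, and this sketch handles only flat keys, not full YAML.

```python
from typing import Dict, Tuple


def parse_frontmatter(text: str) -> Tuple[Dict[str, str], str]:
    """Split a document into (frontmatter state, body).

    Returns an empty state and the original text if there is no
    well-formed frontmatter block at the top.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    state: Dict[str, str] = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            # Closing delimiter found: everything after it is the body.
            return state, "\n".join(lines[i + 1:])
        if ":" in line:
            key, value = line.split(":", 1)
            state[key.strip()] = value.strip()
    return {}, text  # no closing delimiter, treat the whole file as body
```

For example, `parse_frontmatter("---\nstatus: idle\nowner: reviewer\n---\nNotes here")` gives the state `{"status": "idle", "owner": "reviewer"}` and the body `"Notes here"`. Since the state is just text in a local file, you can inspect or edit it by hand.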

Step 8: Notify the lead through tmux

Idle teammates can report back through a coordinator session.
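One plausible way to do this is with `tmux send-keys`, which types text into a target session or pane. The sketch below assumes a coordinator session name that you choose yourself and is not taken from the Claude Code source.

```python
import shutil
import subprocess
from typing import List


def build_notify_command(session: str, message: str) -> List[str]:
    """Build a tmux command that types `message` into `session` and presses Enter."""
    return ["tmux", "send-keys", "-t", session, message, "Enter"]


def notify_lead(session: str, message: str) -> bool:
    """Send the message if tmux is available; report whether it worked."""
    if shutil.which("tmux") is None:
        return False  # tmux is not installed on this machine
    result = subprocess.run(
        build_notify_command(session, message), capture_output=True
    )
    return result.returncode == 0
```

A teammate that runs out of work could call `notify_lead("lead", "reviewer is idle")`, where `lead` is a hypothetical session name. The call returns `False` instead of raising when tmux is not installed or the session does not exist.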

Step 9: Launch swarm from interactive command

The command gathers constraints first, then writes shared tasks.

This is the practical model: explicit flag, explicit files, explicit hooks, explicit task generation.